LangChain

Read the official Document Plan

| classification 1 | classification 2 | PD |

|---|---|---|

| LCEL | Interface | |

| Streaming | ||

| How to | Route between multiple runnables✅ Cancelling requests✅ Use RunnableMaps✅ Add message history (memory) | |

| Cookbook | ✅Prompt + LLM ✅Multiple chains ✅Retrieval augmented generation (RAG) ✅Querying a SQL DB Adding memory ✅Using tools Agents | |

| Model I/O | Quickstart | |

| Concepts | ✅ | |

| Prompts | Quick Start Example selectors Few Shot Prompt Templates Partial prompt templates Composition | |

| LLMs | Quick Start Streaming Caching Custom chat models Tracking token usage Cancelling requests Dealing with API Errors Dealing with rate limits OpenAI Function calling Subscribing to events Adding a timeout | |

| Chat Models | ||

| Output Parsers | ✅ | |

| Retrieval | Home/Concept | |

| Document loaders | ||

| Text Splitters | ||

| Retrievers | ||

| Text embedding models | ||

| Vector stores | ||

| Indexing | ||

| Experimental | ||

| Chains | ✅ | |

| Agents | ||

| More | ||

| Guides | ||

| User cases | SQL | |

| Chatbots | ||

| Q&A with RAG | ||

| Tool use | ||

| Interacting with APIs | ||

| Tabular Question Answering | ||

| Summarization | ||

| Agent Simulations | ||

| Autonomous Agents | ||

| Code Understanding | ||

| Extraction |

LangChain Ecosystem

Advantages: Supports Javascript, which is much better than LllamaIndex (although llamda supports ts, the Document and API are obviously much worse than the Python version)

Ecology:

concept

LLM and Chat Model

Models: Includes two types of LLMs and Chat Models.

import { OpenAI, ChatOpenAI } from "@langchain/openai";

const llm = new OpenAI({

modelName: "gpt-3.5-turbo-instruct",

});

const chatModel = new ChatOpenAI({

modelName: "gpt-3.5-turbo",

});

Anthropic's Model is best suited for XML, while OpenAI's Model is best suited for JSON.

Typescript version

install

npm install langchain @langchain/core @langchain/community @langchain/openai langsmith

LangChain all third-party libraries:links

Quick Start

import { ChatOpenAI } from "@langchain/openai";

async function main() {

const chatModel = new ChatOpenAI({});

let str = await chatModel.invoke("what is LangSmith?");

console.log(str);

}

main();

configuration

Configurable content of OpenAI: seeofficial website

Model Name/Temperature/API Key/BaseURL

import { OpenAI } from "@langchain/openai";

const model = new OpenAI({

modelName: "gpt-3.5-turbo",

temperature: 0.9,

openAIApiKey: "YOUR-API-KEY",

configuration: {

baseURL: "https://your_custom_url.com",

},

});

JSON Pattern

const jsonModeModel = new ChatOpenAI({

modelName: "gpt-4-1106-preview",

maxTokens: 128,

}).bind({

response_format: {

type: "json_object",

},

});

seedefinition

Function Call/Tools

Type 1: tools

Use the latest tools interface

const llm = new ChatOpenAI();

const llmWithTools = llm.bind({

tools: [tool],

tool_choice: tool,

});

const prompt = ChatPromptTemplate.fromMessages([

["system", "You are the funniest comedian, tell the user a joke about their topic."],

["human", "Topic: {topic}"]

])

const chain = prompt.pipe(llmWithTools);

const result = await chain.invoke({ topic: "Large Language Models" });

Specify Parser

import { JsonOutputToolsParser } from "langchain/output_parsers";

const outputParser = new JsonOutputToolsParser();

Type 2: function call

There are two ways:

Function passed in when calling the hour

const result = await model.invoke([new HumanMessage("What a beautiful day!")], {

functions: [extractionFunctionSchema],

function_call: { name: "extractor" },

});

Binding a Function to a Model

The same Model can be reused over time

const model = new ChatOpenAI({ modelName: "gpt-4" }).bind({

functions: [extractionFunctionSchema],

function_call: { name: "extractor" },

});

Define API

there are two ways

const extractionFunctionSchema = {

name: "extractor",

description: "Extracts fields from the input.",

parameters: {

type: "object",

properties: {

tone: {

type: "string",

enum: ["positive", "negative"],

description: "The overall tone of the input",

},

word_count: {

type: "number",

description: "The number of words in the input",

},

chat_response: {

type: "string",

description: "A response to the human's input",

},

},

required: ["tone", "word_count", "chat_response"],

},

};

Using Zod

import { ChatOpenAI } from "@langchain/openai";

import { z } from "zod";

import { zodToJsonSchema } from "zod-to-json-schema";

import { HumanMessage } from "@langchain/core/messages";

const extractionFunctionSchema = {

name: "extractor",

description: "Extracts fields from the input.",

parameters: zodToJsonSchema(

z.object({

tone: z

.enum(["positive", "negative"])

.describe("The overall tone of the input"),

entity: z.string().describe("The entity mentioned in the input"),

word_count: z.number().describe("The number of words in the input"),

chat_response: z.string().describe("A response to the human's input"),

final_punctuation: z

.optional(z.string())

.describe("The final punctuation mark in the input, if any."),

})

),

};

Model I/O

Loader

Retriever (important)

Divided into two classes

- bring their own

- Third Party Ensemble

| Retriever | explain |

|---|---|

| Knowledge Bases for Amazon Bedrock | |

| Chaindesk Retriever | |

| ChatGPT Plugin Retriever | |

| Dria Retriever | |

| Exa Search | |

| HyDE Retriever | |

| Amazon Kendra Retriever | |

| Metal Retriever | |

| Supabase Hybrid Search | |

| Tavily Search API | |

| Time-Weighted Retriever | |

| Vector Store | |

| Vespa Retriever | |

| Zep Retriever |

Similarity: ScoreThreshold

ScoreThreshold is a percentage.

- 1.0 is a complete match

- 0.95 may be about right

import { MemoryVectorStore } from "langchain/vectorstores/memory";

import { OpenAIEmbeddings } from "@langchain/openai";

import { ScoreThresholdRetriever } from "langchain/retrievers/score_threshold";

async function main() {

const vectorStore = await MemoryVectorStore.fromTexts(

[

"Buildings are made out of brick",

"Buildings are made out of wood",

"Buildings are made out of stone",

"Buildings are made out of atoms",

"Buildings are made out of building materials",

"Cars are made out of metal",

"Cars are made out of plastic",

],

[{ id: 1 }, { id: 2 }, { id: 3 }, { id: 4 }, { id: 5 }],

new OpenAIEmbeddings()

);

const retriever = ScoreThresholdRetriever.fromVectorStore(vectorStore, {

minSimilarityScore: 0.95, // Finds results with at least this similarity score

maxK: 100, // The maximum K value to use. Use it based to your chunk size to make sure you don't run out of tokens

kIncrement: 2, // How much to increase K by each time. It'll fetch N results, then N + kIncrement, then N + kIncrement * 2, etc.

});

const result = await retriever.getRelevantDocuments(

"building is made out of atom"

);

console.log(result);

};

main();

// [

// Document {

// pageContent: 'Buildings are made out of atoms',

// metadata: { id: 4 }

// }

// ]

Self-Querying (very good, suitable for Query of structured Data)

Supabase

Parser

| 解析器 | 说明 | |

|---|---|---|

| common | String output parser | |

| formatted | Structured output parser | Easy customization |

| OpenAI Tools | 常用 | |

| standard format | JSON Output Functions Parser | in common use |

| HTTP Response Output Parser | ||

| XML output parser | ||

| list | List parser | in common use |

| Custom list parser | 常用 | |

| other | Datetime parser | be of use |

| Auto-fixing parser |

Multiple chains

serial

two patterns

.pipeRunnableSequence.from([])

Use.pipe

const prompt = ChatPromptTemplate.fromMessages([

["human", "Tell me a short joke about {topic}"],

]);

const model = new ChatOpenAI({});

const outputParser = new StringOutputParser();

const chain = prompt.pipe(model).pipe(outputParser);

const response = await chain.invoke({

topic: "ice cream",

});

Use RunnableSequence.from

const model = new ChatOpenAI({});

const promptTemplate = PromptTemplate.fromTemplate(

"Tell me a joke about {topic}"

);

const chain = RunnableSequence.from([

promptTemplate,

model

]);

const result = await chain.invoke({ topic: "bears" });

Batch and parallel

LCEL itself supports

const chain = promptTemplate.pipe(model);

await chain.batch([{ topic: "bears" }, { topic: "cats" }])

Use RunnableMap

const model = new ChatAnthropic({});

const jokeChain = PromptTemplate.fromTemplate(

"Tell me a joke about {topic}"

).pipe(model);

const poemChain = PromptTemplate.fromTemplate(

"write a 2-line poem about {topic}"

).pipe(model);

const mapChain = RunnableMap.from({

joke: jokeChain,

poem: poemChain,

});

const result = await mapChain.invoke({ topic: "bear" });

branch

two patterns

- RunnableBranch

- Custom factory function

Abort, retry, Fallback

N/A

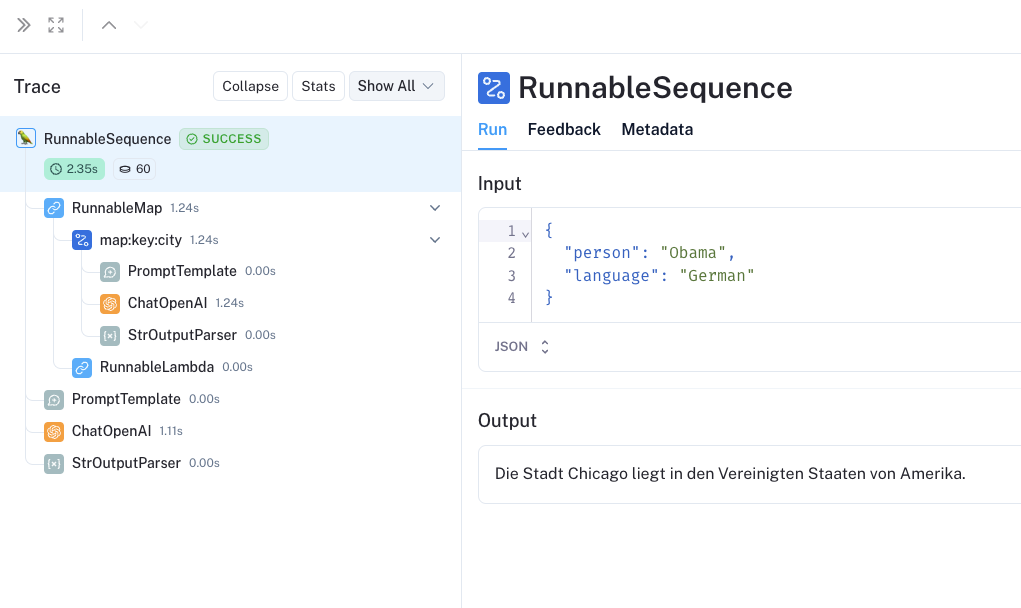

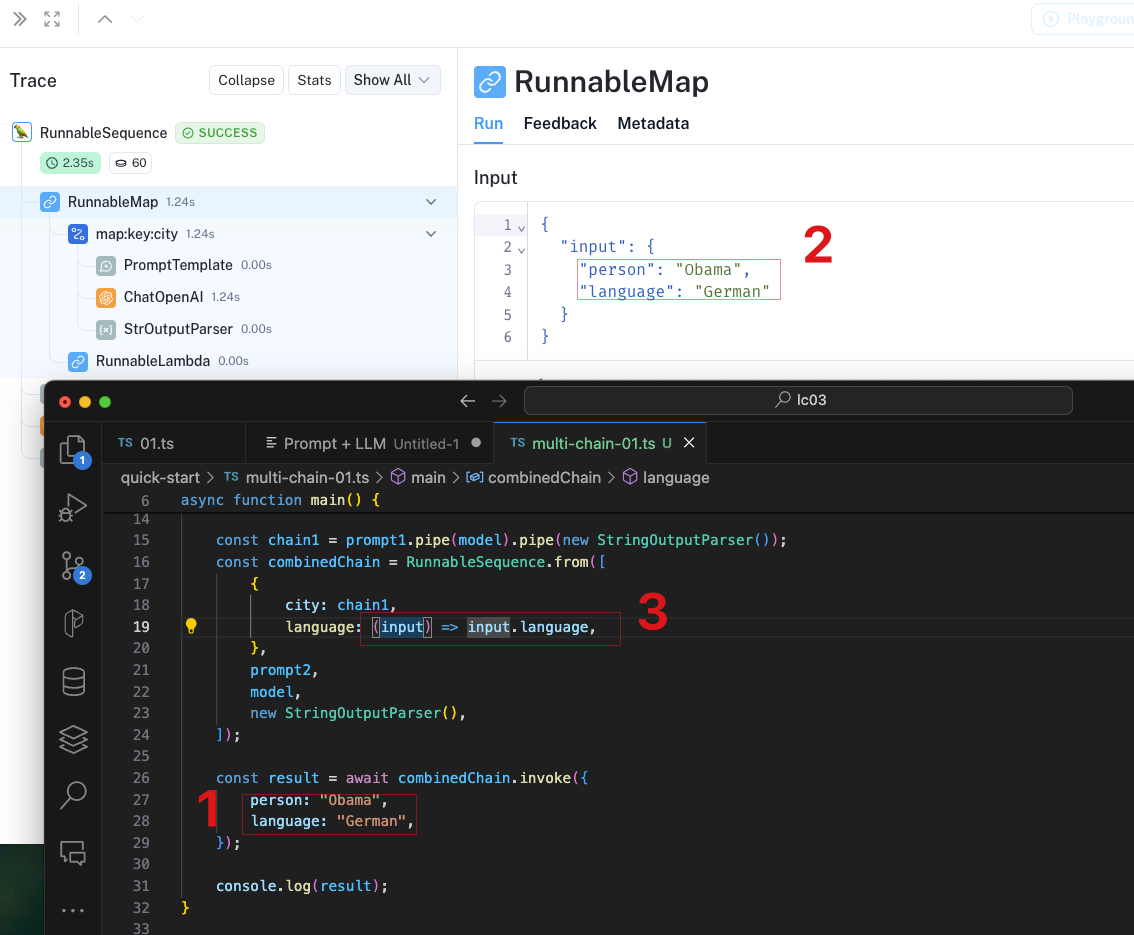

Typical example: Serial

import { PromptTemplate } from "@langchain/core/prompts";

import { RunnableSequence } from "@langchain/core/runnables";

import { StringOutputParser } from "@langchain/core/output_parsers";

import { ChatOpenAI } from "@langchain/openai";

async function main() {

const prompt1 = PromptTemplate.fromTemplate(

`What is the city {person} is from? Only respond with the name of the city.`

);

const prompt2 = PromptTemplate.fromTemplate(

`What country is the city {city} in? Respond in {language}.`

);

const model = new ChatOpenAI({});

const chain1 = prompt1.pipe(model).pipe(new StringOutputParser());

const combinedChain = RunnableSequence.from([

{

city: chain1,

language: (input) => input.language,

},

prompt2,

model,

new StringOutputParser(),

]);

const result = await combinedChain.invoke({

person: "Obama",

language: "German",

});

console.log(result);

}

main();

See the resultshere

RAG

Load/Loader/ETL

| classification | project | |

|---|---|---|

| local resources | Folders with multiple files ChatGPT files CSV files Docx files EPUB files JSON files JSONLines files Notion markdown export Open AI Whisper Audio PDF files PPTX files Subtitles Text files Unstructured | |

| Web resource | Cheerio Puppeteer Playwright Apify Dataset AssemblyAI Audio Transcript Azure Blob Storage Container Azure Blob Storage File College Confidential Confluence Couchbase Figma GitBook GitHub Hacker News IMSDB Notion API PDF files Recursive URL Loader S3 File SearchApi Loader SerpAPI Loader Sitemap Loader Sonix Audio Blockchain Data YouTube transcripts |

More general ELT tool:unstructured

resolution

Python version

InstallLangChain family bucket

pip install langchain langchain-community langchain-core "langserve[all]" langchain-cli langsmith langchain-openai

Latest version number:0.2.6 (as of July 3, 2024 annum month day)

Hub

LangSmith has a Hub, similar to Github.

For example, RLM

import { UnstructuredDirectoryLoader } from "langchain/document_loaders/fs/unstructured";

import { RecursiveCharacterTextSplitter } from "langchain/text_splitter";

import { MemoryVectorStore } from "langchain/vectorstores/memory"

import { OpenAIEmbeddings, ChatOpenAI } from "@langchain/openai";

import { pull } from "langchain/hub";

import { ChatPromptTemplate } from "@langchain/core/prompts";

import { StringOutputParser } from "@langchain/core/output_parsers";

import { createStuffDocumentsChain } from "langchain/chains/combine_documents";

async function main() {

const options = {

apiUrl: "http://localhost:8000/general/v0/general",

};

const loader = new UnstructuredDirectoryLoader(

"sample-docs",

options

);

const docs = await loader.load();

// console.log(docs);

const vectorStore = await MemoryVectorStore.fromDocuments(docs, new OpenAIEmbeddings());

const retriever = vectorStore.asRetriever();

const prompt = await pull<ChatPromptTemplate>("rlm/rag-prompt");

const llm = new ChatOpenAI({ modelName: "gpt-3.5-turbo", temperature: 0 });

const ragChain = await createStuffDocumentsChain({

llm,

prompt,

outputParser: new StringOutputParser(),

})

const retrievedDocs = await retriever.getRelevantDocuments("what is task decomposition")

const r = await ragChain.invoke({

question: "列出名字和联系方式",

context: retrievedDocs,

})

console.log(r);

}

main();